Read Parquet File PySpark

Parquet is a columnar format that is supported by many other data processing systems. Spark SQL provides support for both reading and writing Parquet files. PySpark provides a `parquet()` method in the `DataFrameReader` class to read a Parquet file into a DataFrame; its documented signature ends with `**options: OptionalPrimitiveType) → DataFrame`. A common question is how to read a Parquet file with PySpark, and two ways of doing so come up. The older one starts from `from pyspark.sql import SQLContext` and `sqlContext = SQLContext(sc)`. The newer one starts from `from pyspark.sql import SparkSession` and builds a session with `SparkSession.builder.master('local')`.
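As a minimal sketch of the two entry points mentioned above, the snippet below wraps each in a small helper. The helper names (`build_local_session`, `read_parquet`, `read_parquet_legacy`), the application name, and the idea of selecting a subset of columns are illustrative assumptions, not part of the original text. The PySpark imports are placed inside the functions so the module can be loaded even where PySpark is not installed.

```python
from typing import Optional, Sequence


def build_local_session(app_name: str = "read-parquet-example"):
    """Create (or reuse) a local SparkSession. The app name is a placeholder."""
    # Imported lazily so that importing this module does not require PySpark.
    from pyspark.sql import SparkSession

    return (
        SparkSession.builder
        .master("local")
        .appName(app_name)
        .getOrCreate()
    )


def read_parquet(spark, path: str, columns: Optional[Sequence[str]] = None):
    """Read a Parquet file (or directory) into a DataFrame.

    `spark.read` is a DataFrameReader; its parquet() method does the loading.
    If `columns` is given, only those columns are kept, which suits Parquet's
    columnar layout.
    """
    df = spark.read.parquet(path)
    if columns:
        df = df.select(*columns)
    return df


def read_parquet_legacy(sc, path: str):
    """Older route through SQLContext, given an existing SparkContext `sc`.

    SQLContext is deprecated in Spark 3.x in favour of SparkSession; it is
    shown here only because the text mentions it.
    """
    from pyspark.sql import SQLContext

    sql_context = SQLContext(sc)
    return sql_context.read.parquet(path)
```

With PySpark installed, a typical call would be `spark = build_local_session()` followed by `df = read_parquet(spark, "people.parquet")`, where `people.parquet` is a hypothetical path; `df.printSchema()` and `df.show(5)` are then convenient ways to inspect the result.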


pd.read_parquet Read Parquet Files in Pandas • datagy


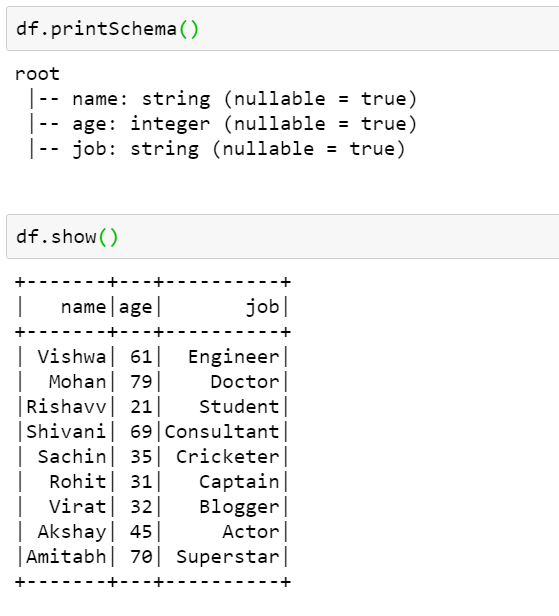

PySpark Tutorial 9 PySpark Read Parquet File PySpark with Python


How to read a Parquet file using PySpark


Python How To Load A Parquet File Into A Hive Table Using Spark Riset


How To Read Various File Formats In Pyspark Json Parquet Orc Avro Www


How to resolve Parquet File issue


How To Read A Parquet File Using Pyspark Vrogue


PySpark Read and Write Parquet File Spark by {Examples}


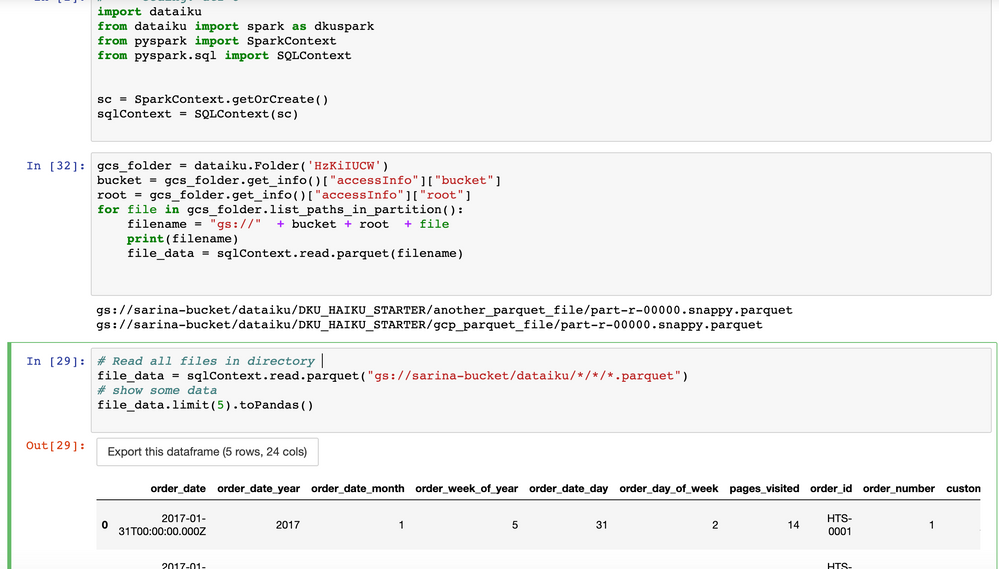

Solved How to read parquet file from GCS using pyspark? Dataiku


Read Parquet File In Pyspark Dataframe news room

